Policy or Value? Loss Function and Playing Strength in AlphaZero-like Self-play

By a mysterious writer

Last updated 18 October 2024

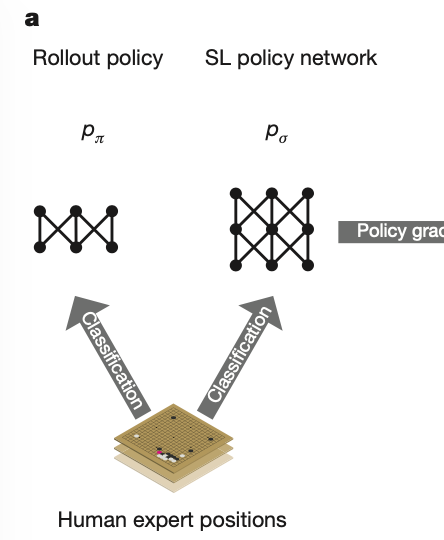

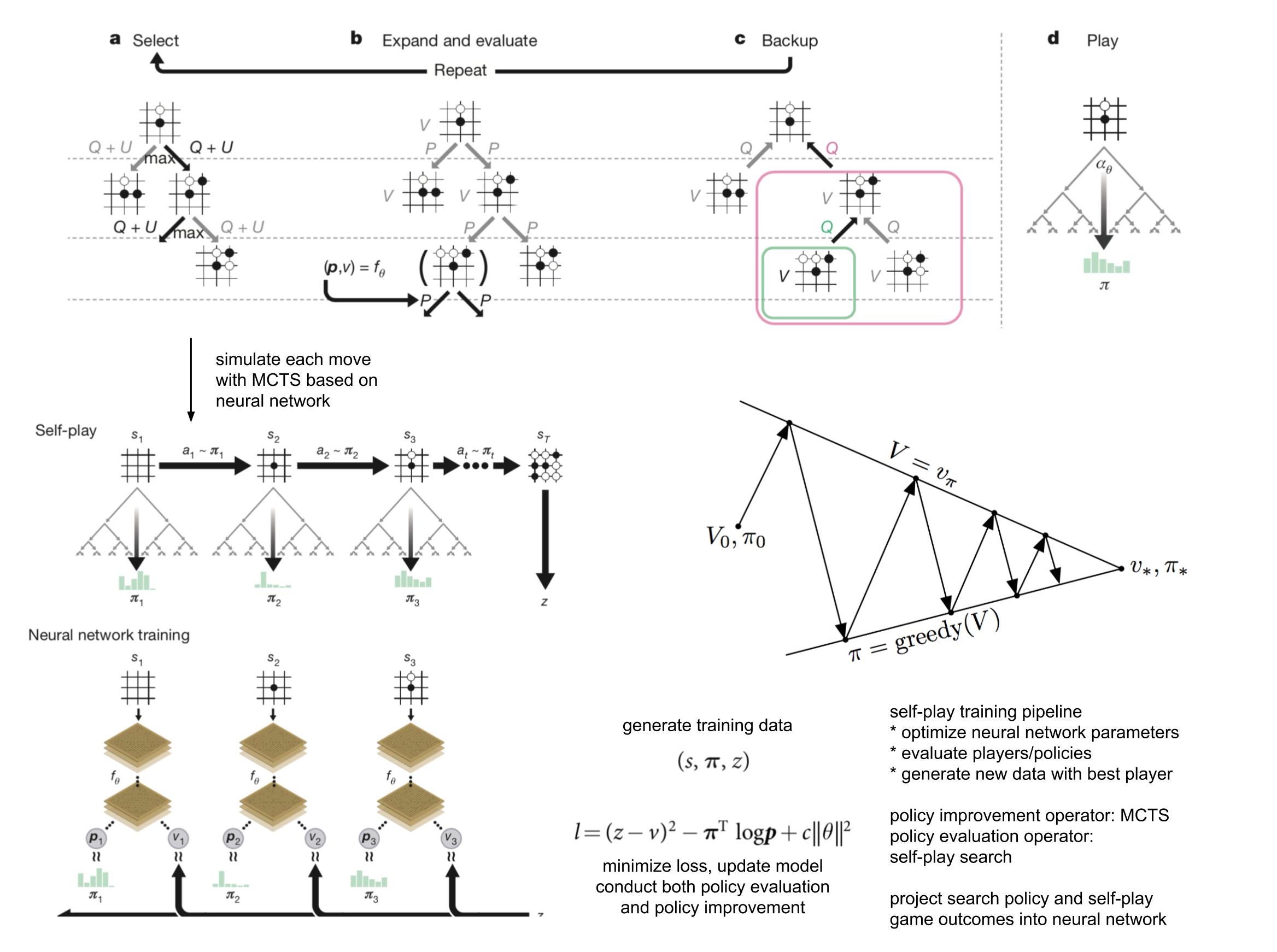

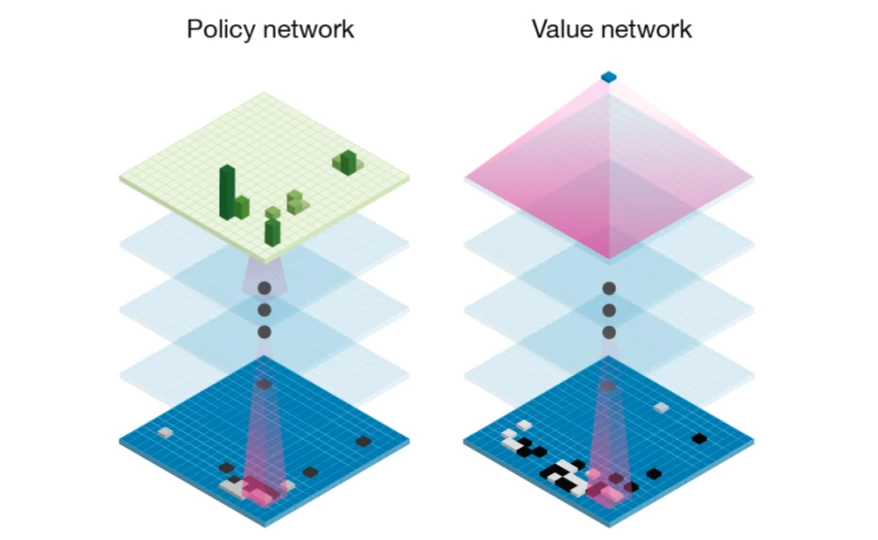

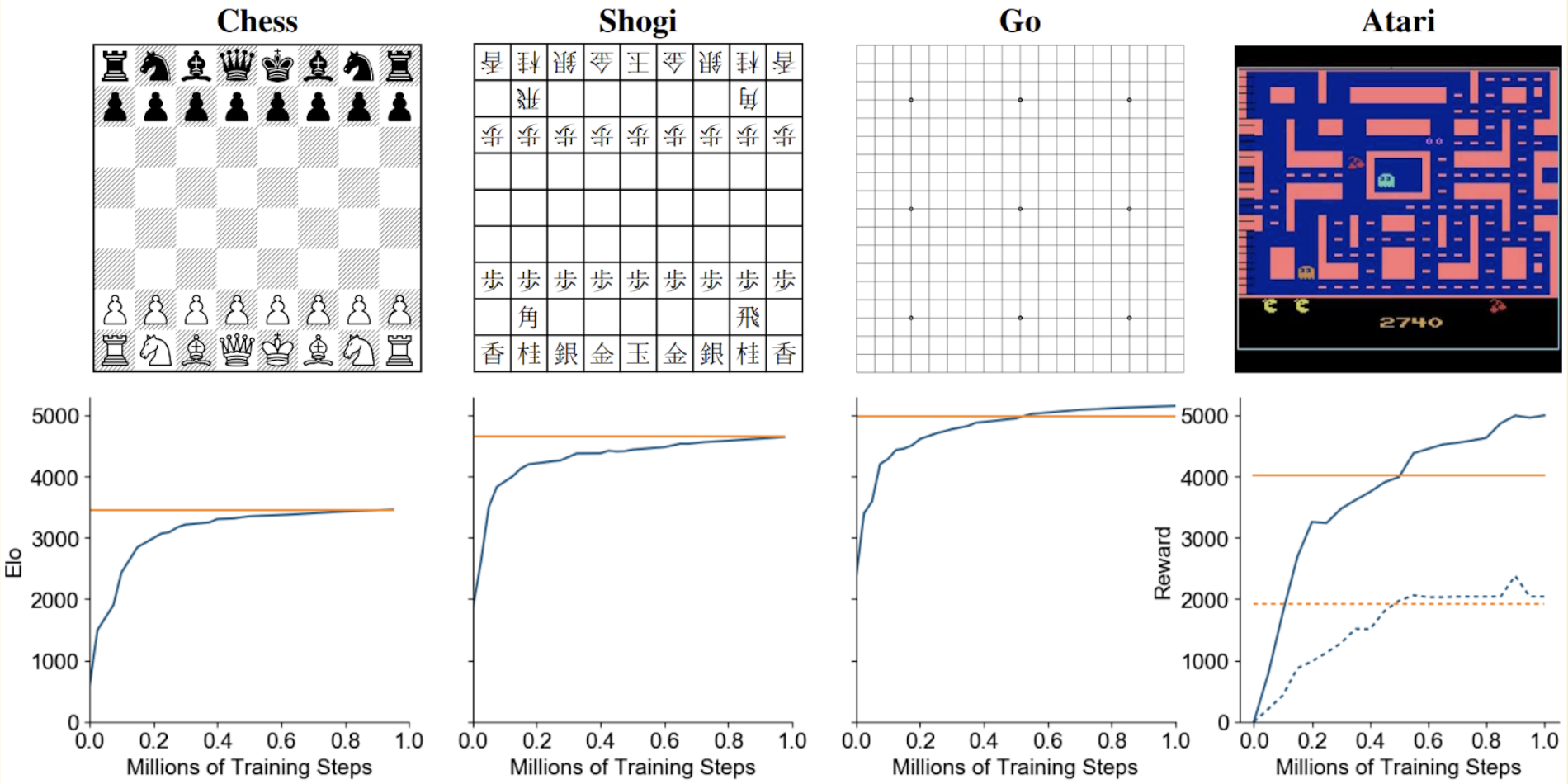

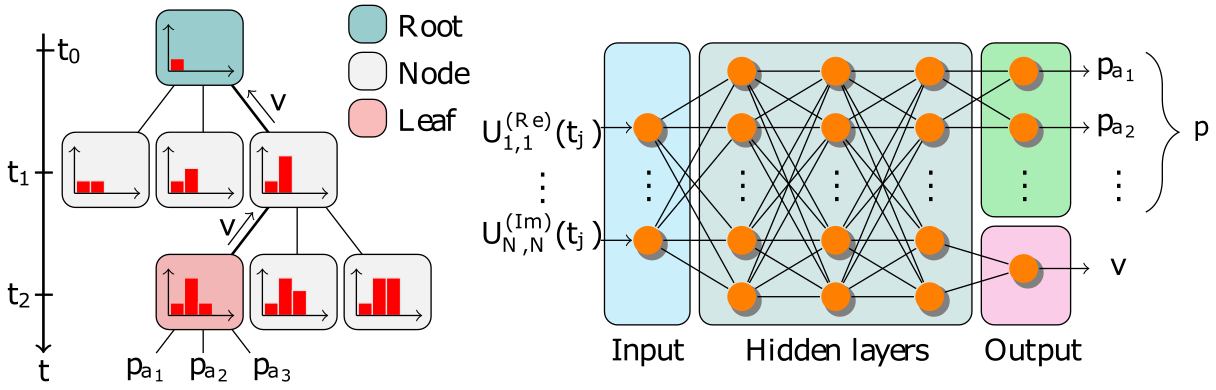

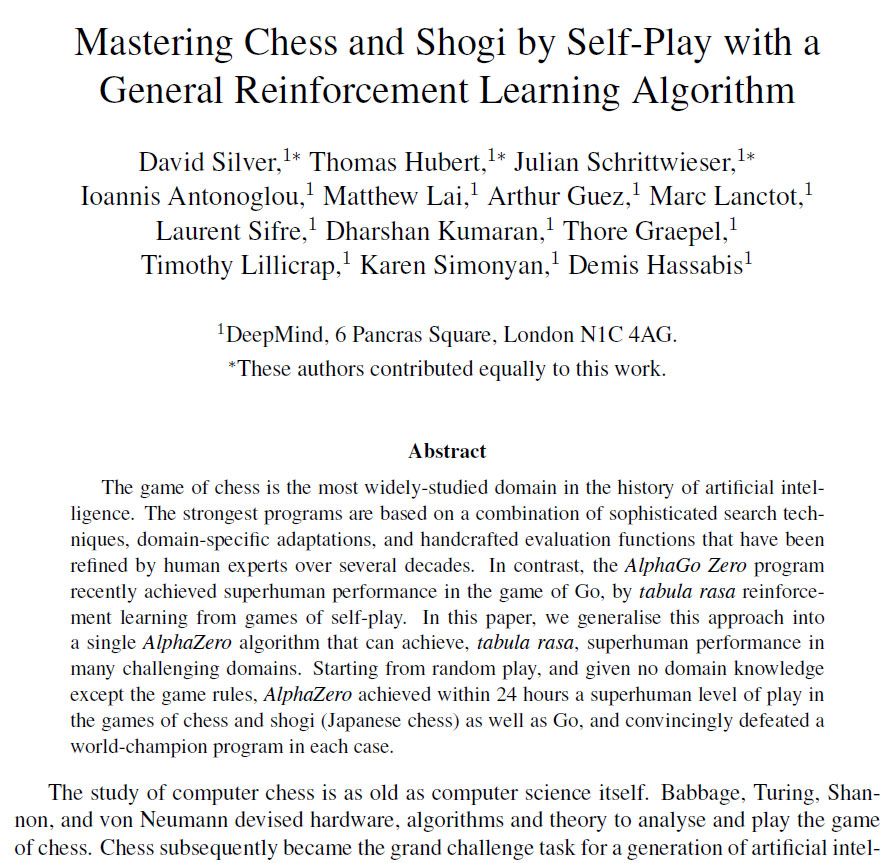

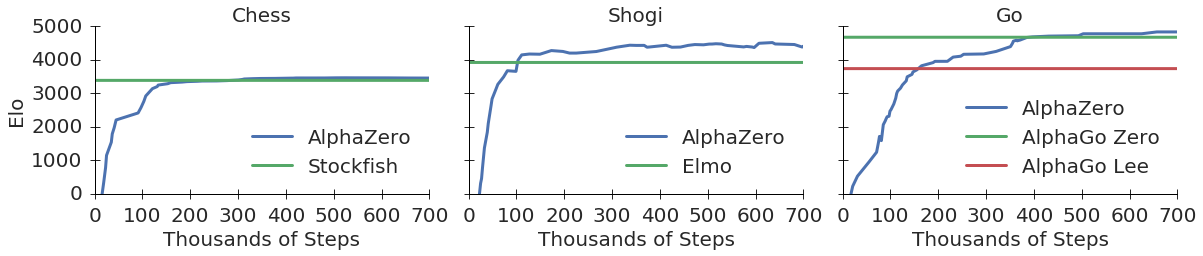

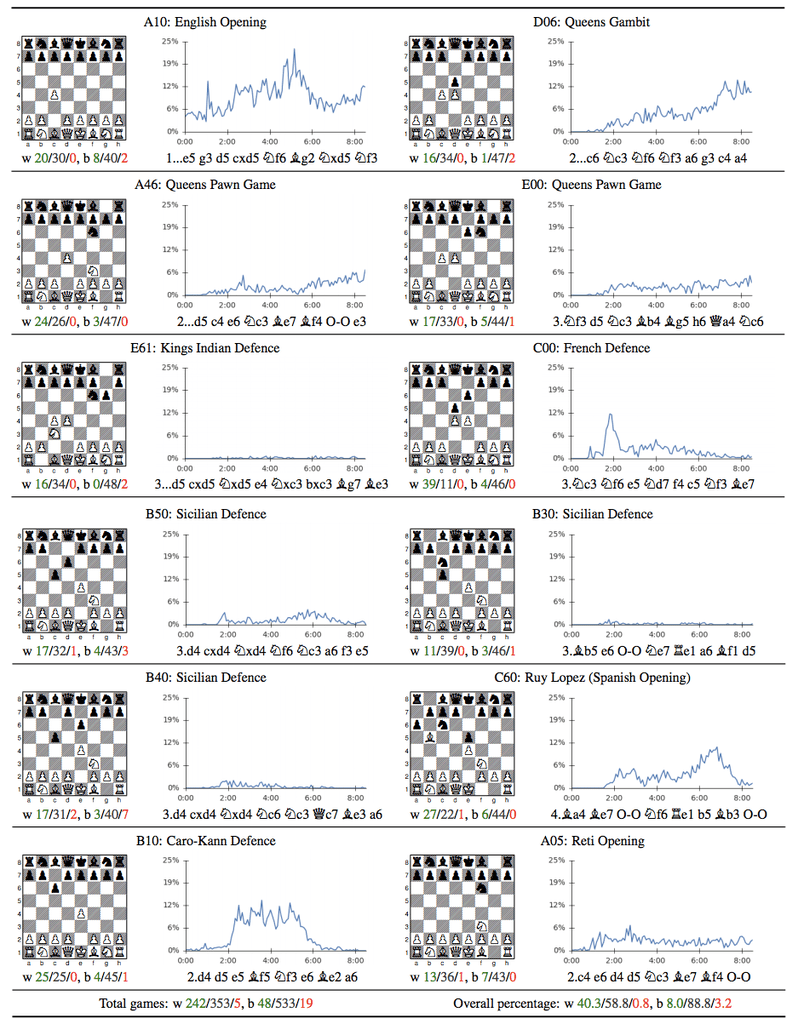

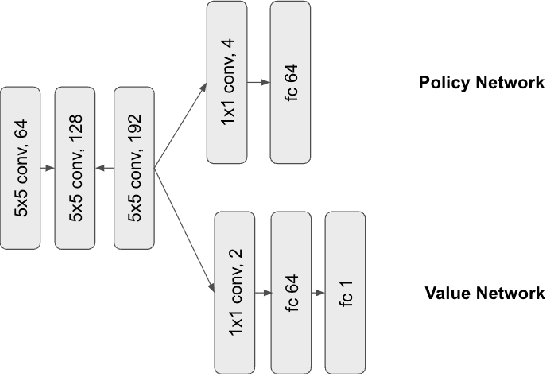

Recently, AlphaZero has achieved outstanding performance in playing Go, Chess, and Shogi. Players in AlphaZero consist of a combination of Monte Carlo Tree Search and a Deep Q-network that is trained using self-play. The unified Deep Q-network has a policy head and a value head. In AlphaZero, training minimizes the sum of the policy loss and the value loss. However, it is not clear whether, and under which circumstances, other formulations of the objective function are better. Therefore, in this paper, we perform experiments with combinations of these two optimization targets.

Self-play is computationally intensive; by using small games, we are able to run multiple test cases. We use a light-weight open source reimplementation of AlphaZero on two different games. We investigate optimizing the two targets independently, and also try different combinations (sum and product). Our results indicate that, at least for relatively simple games such as 6x6 Othello and Connect Four, optimizing the sum, as AlphaZero does, performs consistently worse than other objectives, in particular worse than optimizing only the value loss.

Moreover, we find that care must be taken in computing the playing strength. Tournament Elo ratings differ from training Elo ratings: training Elo ratings, though cheap to compute and frequently reported, can be misleading and may lead to bias. It is currently not clear how these results transfer to more complex games, and whether there is a phase transition between our setting and the AlphaZero application to Go, where the sum is seemingly the better choice.
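To make the four objectives concrete, here is a minimal per-sample sketch. It assumes the standard AlphaZero loss terms: a squared error between the game outcome z and the value head's prediction v, and a cross-entropy between the MCTS visit distribution pi and the policy head's output p. L2 regularization is omitted. The function names and the `mode` strings are hypothetical illustrations, not taken from the paper's reimplementation.

```python
import math
from typing import Sequence


def value_loss(z: float, v: float) -> float:
    """Squared error between game outcome z and predicted value v (both in [-1, 1])."""
    return (z - v) ** 2


def policy_loss(pi: Sequence[float], p: Sequence[float], eps: float = 1e-12) -> float:
    """Cross-entropy -sum(pi * log p) between MCTS visit distribution pi and policy p.

    eps guards against log(0) when the network assigns zero probability to a move.
    """
    return -sum(t * math.log(max(q, eps)) for t, q in zip(pi, p))


def combined_loss(z: float, v: float, pi: Sequence[float], p: Sequence[float],
                  mode: str = "sum") -> float:
    """Combine the two targets; the mode names here are hypothetical labels."""
    lv = value_loss(z, v)
    lp = policy_loss(pi, p)
    if mode == "value":    # optimize the value head only
        return lv
    if mode == "policy":   # optimize the policy head only
        return lp
    if mode == "sum":      # AlphaZero's choice (without regularization)
        return lv + lp
    if mode == "product":  # multiplicative combination
        return lv * lp
    raise ValueError(f"unknown mode: {mode}")


if __name__ == "__main__":
    # One sample: the game was won (z = 1), and MCTS put all visits on move 0.
    for m in ("value", "policy", "sum", "product"):
        print(m, combined_loss(1.0, 0.5, [1.0, 0.0], [0.5, 0.5], mode=m))
```

In practice these per-sample losses would be averaged over a minibatch. Note that with the product, the gradient of each term is scaled by the current value of the other (the derivative of lv * lp is lp * dlv + lv * dlp), so the two heads are weighted quite differently than under the plain sum.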

